The Interview Consistency Problem No One Talks About

If you’ve ever been part of a hiring panel, you’ve probably faced this moment:

Two interviewers talk about the same candidate, and somehow, it feels like they interviewed two different people.

One thinks the candidate was brilliant.

The other says, “I’m not sure they can handle the role.”

That disconnect doesn’t come from differing opinions; it comes from differing criteria.

When interviews aren’t standardized, every candidate is judged through a slightly different lens, and that’s where inconsistency creeps in.

A candidate evaluation form (or interview scorecard) is designed to fix that.

But what separates a “good” one from a mediocre checklist? And how do you build one that’s simple, fair, and scalable?

Let’s work through it step by step.

Why You Need a Good Scorecard in the First Place

Before we talk structure, it’s worth asking: why do scorecards matter so much?

A well-built scorecard doesn’t just document opinions; it translates observations into evidence.

It ensures every candidate is evaluated using the same yardstick, making the hiring process fairer, more efficient, and more data-driven.

Without one, interviews become an art form; with one, they become a repeatable framework.

A great scorecard should help you:

- Capture measurable insights from every interviewer.

- Compare candidates objectively.

- Align recruiters and hiring managers around the same definition of “qualified.”

- Build a record of consistent, defensible hiring decisions.

In short, it replaces gut instinct with clarity.

The Anatomy of a Great Candidate Evaluation Form

Let’s break down what makes a candidate evaluation form actually work in practice, the kind that doesn’t just collect feedback but builds consistency across every hiring decision.

1. Clear, Role-Specific Parameters

The quickest way to weaken a hiring process is to treat every role the same.

Generic scorecards often fail because they try to apply one framework to everyone, whether you’re hiring a software engineer or a customer success manager.

A good scorecard, on the other hand, begins with a simple question:

“What does success look like in this role?”

For a software engineer, the parameters might be:

- Technical proficiency

- System design

- Problem-solving

- Communication

- Cultural fit

Whereas a customer success role might focus on:

- Empathy

- Ownership

- Relationship management

But defining parameters isn’t enough; they need structure.

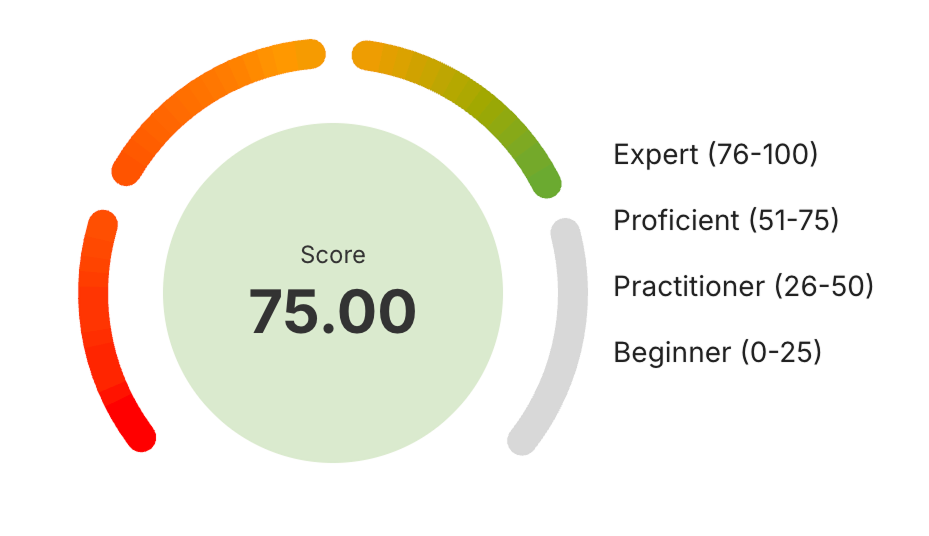

Each parameter should have a measurable scale (like Beginner–Expert or 1–5) with clear behavioral anchors explaining what each level looks like.

That’s how “4/5 in communication” becomes a real signal, not a guess.

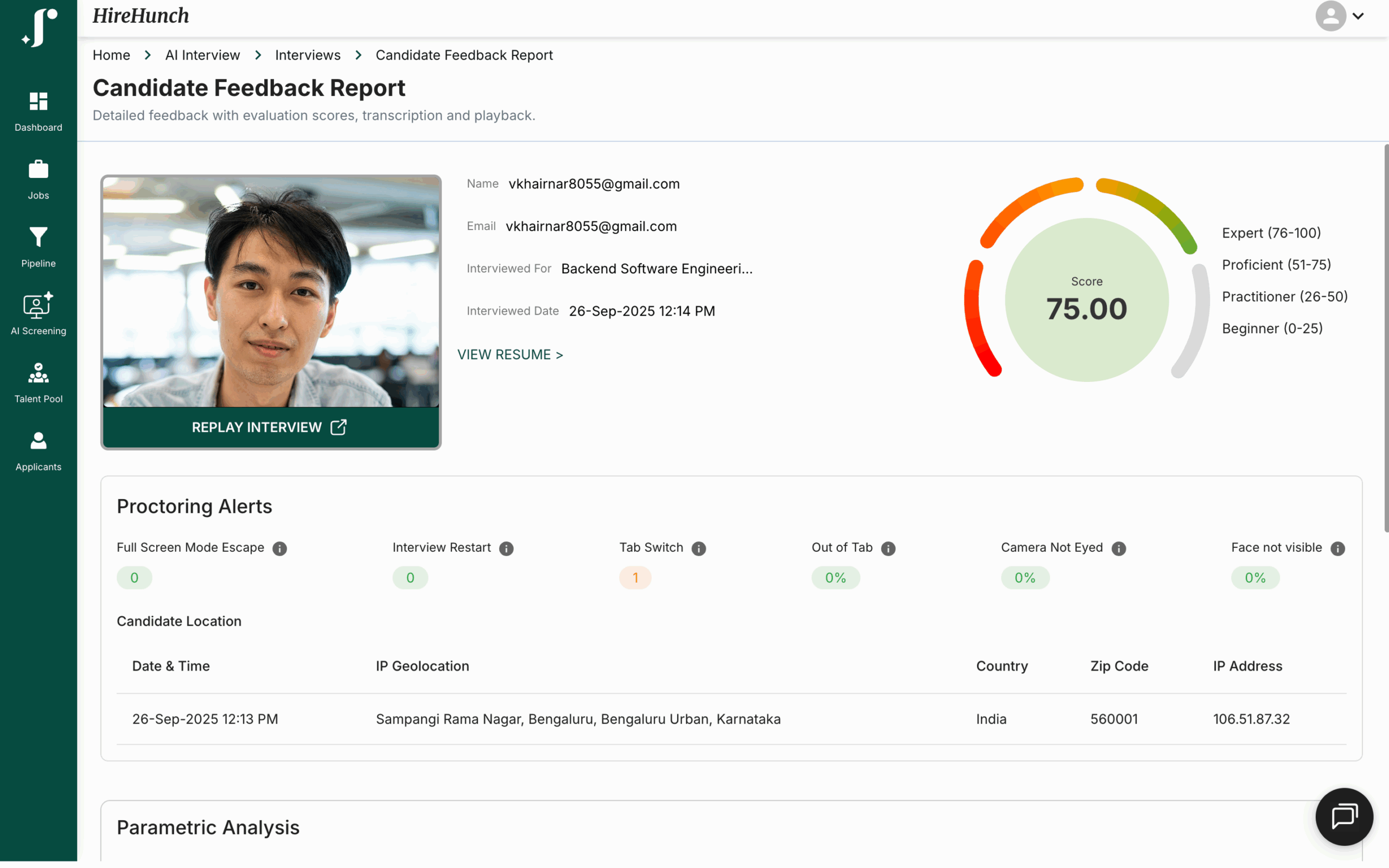

With JusRecruit, this kind of structure happens naturally. During an AI Interview, every question is mapped to a parameter, and every response is scored against a consistent rubric.

Instead of arbitrary notes, you see data-backed proficiency bands across multiple skills, giving you both context and confidence in each decision.

2. A Structured Yet Flexible Format

A good interview assessment form gives direction without dictation.

Too rigid, and interviewers lose their judgment. Too open-ended, and you lose consistency.

The balance lies in a structure that standardizes what’s being evaluated while allowing space for real human observation.

Think of it as a framework that says,

“Here’s what to assess, but tell us how it showed up.”

With JusRecruit, every interview is built this way.

The AI interview keeps structure intact, asking the same core questions across candidates, while also capturing the nuance of each conversation.

Each interaction is automatically logged and summarized, combining structured evaluation with natural dialogue.

Interviewers no longer need to take extensive notes; they get a complete, organized transcript with key insights already surfaced, making post-interview feedback faster and far more objective.

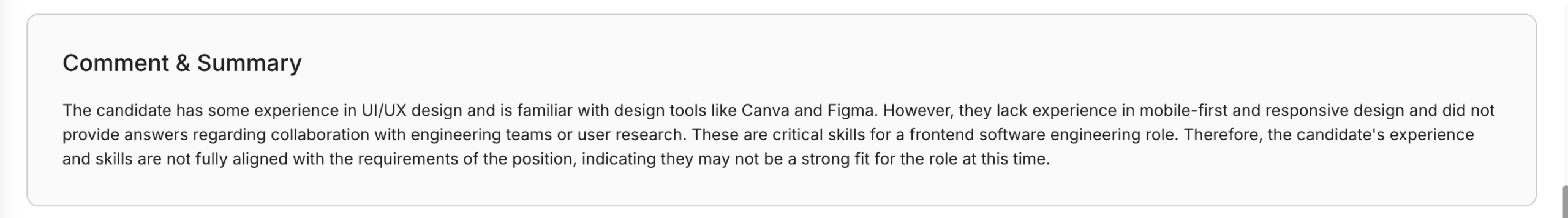

3. A Mix of Quantitative and Qualitative Evaluation

The best candidate evaluation sheet never relies on numbers alone.

Scores tell you how well a candidate performed, but comments tell you why.

A great scorecard blends both:

- Quantitative: measurable ratings that highlight strengths and weaknesses across competencies.

- Qualitative: short, contextual notes that explain what led to those ratings.

For instance:

“Candidate demonstrated strong understanding of message queues and system design patterns but struggled to articulate trade-offs.”

That combination turns raw data into insight, something both recruiters and hiring managers can trust.

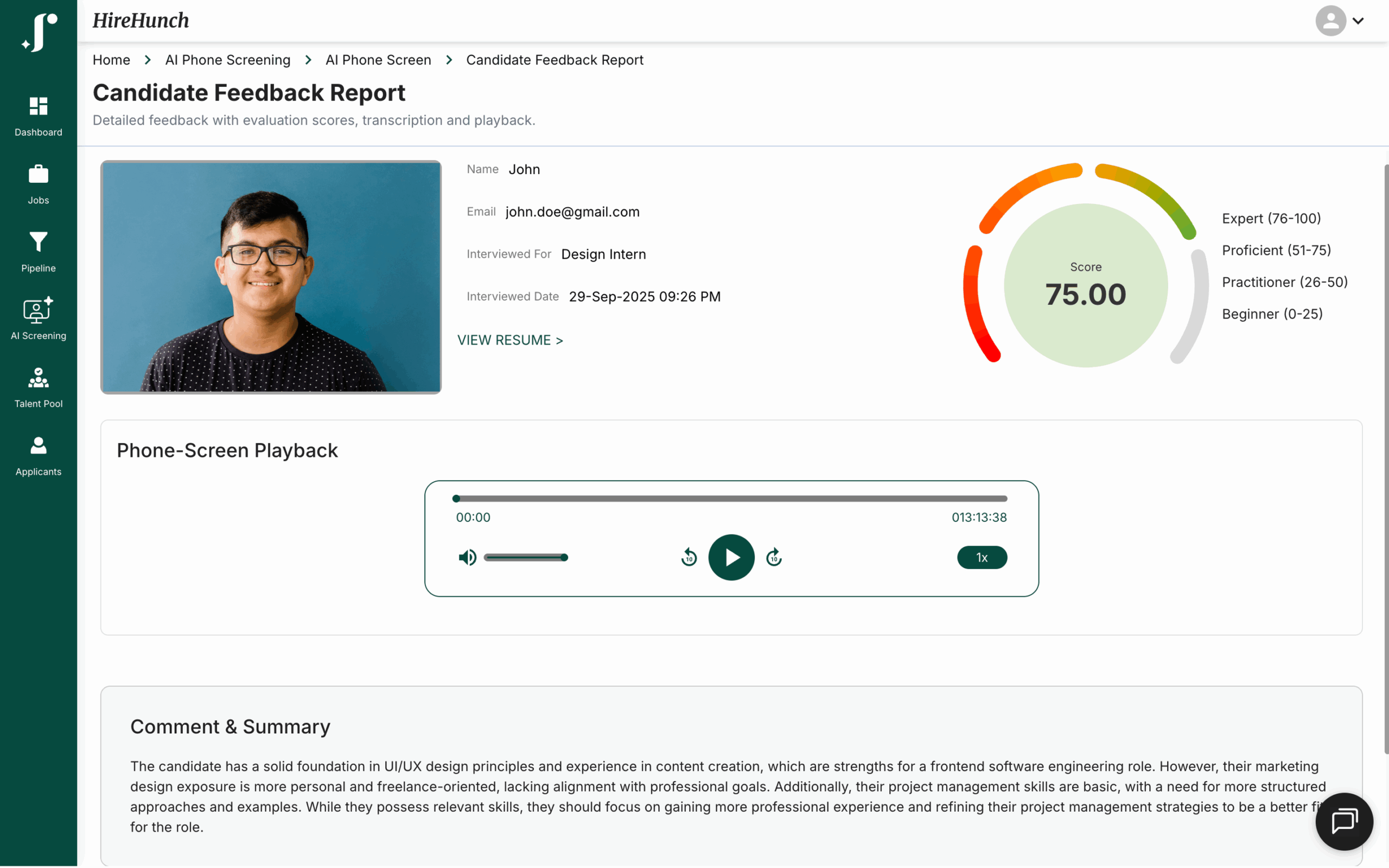

In JusRecruit’s AI Phone Screen, this blend is built in.

The system not only generates a proficiency score but also writes a concise summary of why the candidate scored that way.

You see the metrics, but also the reasoning, helping your team move from intuition to evidence, without losing the human perspective.

4. Built-In Consistency and Bias Control

Even the most seasoned interviewers can carry unintentional bias; it’s simply human nature.

That’s why structure is so important: it’s not about restricting judgment but ensuring it’s applied consistently.

When every interviewer evaluates candidates on the same parameters using a shared candidate evaluation form, you reduce the noise of personal preference and recency bias.

Consistency deepens even further with structured interviews, where every candidate is asked the same questions in the same sequence.

This uniformity allows your scorecard to capture truly comparable data, making decisions more about evidence and less about intuition.

With JusRecruit, consistency is built into the process. The AI Phone Screen standardizes early evaluation by asking identical, role-based questions and scoring each response through the same rubric. Every candidate’s performance is measured on equal footing, ensuring fairness from the very first interaction.

5. Clarity in Scoring Scales

A hiring scorecard should never leave anyone wondering what a “good” score means.

Ambiguous ratings like Poor, Average, and Excellent make hiring subjective again, and that’s exactly what a scorecard is meant to prevent.

The solution lies in defined proficiency bands, for instance:

- Beginner (0–25)

- Practitioner (26–50)

- Proficient (51–75)

- Expert (76–100)

This structure doesn’t just standardize ratings; it visualizes competence.

Recruiters can instantly tell how a candidate compares within a cohort: not just who performed well, but who’s truly ready for the role.
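To make the bands concrete, here is a minimal Python sketch of how a score could be mapped to a band. The band names and ranges come from the list above; the function name `proficiency_band` and the choice to accept only whole-number scores are assumptions for illustration, not a description of how any particular tool does it.

```python
# Upper bound of each band, taken from the list above.
BANDS = [
    (25, "Beginner"),
    (50, "Practitioner"),
    (75, "Proficient"),
    (100, "Expert"),
]


def proficiency_band(score: int) -> str:
    """Map a whole-number score from 0 to 100 to its proficiency band."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    for upper_bound, name in BANDS:
        if score <= upper_bound:
            return name
    raise AssertionError("unreachable: every valid score falls in a band")


print(proficiency_band(25))  # Beginner
print(proficiency_band(26))  # Practitioner
```

Keeping the boundaries in one table means everyone who reads a score is working from the same cut-offs.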

In JusRecruit, this clarity shows up through visual gauges and color-coded scoring rings that map every candidate’s proficiency. At a glance, you can see skill strength, confidence range, and readiness, all quantified, consistent, and easy to interpret.

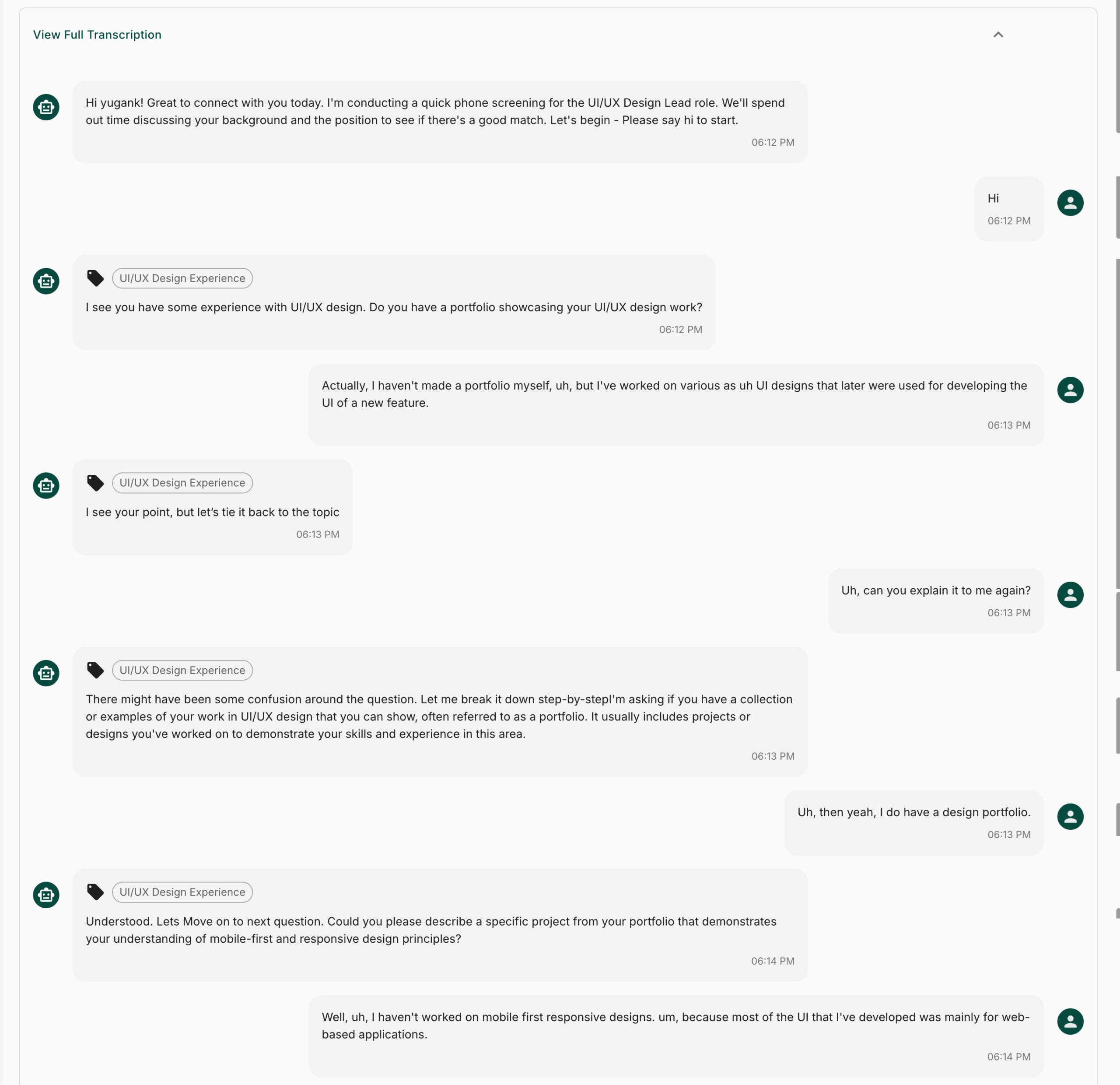

6. Evidence Over Opinion

Great hiring decisions aren’t made on gut instinct; they’re made on proof.

A strong interview scorecard template ensures that every score comes with supporting evidence, creating a transparent trail that hiring teams can review and trust.

Each evaluation should show not just what was scored but why, including:

- The exact question asked

- The candidate’s response

- The skill or competency assessed (e.g., System Design, Technical Skill Proficiency)

- The evaluator’s contextual notes
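If you keep scorecards in a spreadsheet or an internal tool, the four items above can be treated as required fields on every score. The sketch below is one possible shape, assuming a hypothetical `EvidenceEntry` record and a hypothetical `missing_evidence` helper; the field names are illustrative.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EvidenceEntry:
    """One scored answer together with the evidence behind it (hypothetical shape)."""
    question: str    # the exact question asked
    response: str    # the candidate's response
    competency: str  # the skill assessed, e.g. "System Design"
    notes: str       # the evaluator's contextual notes
    score: int       # rating on whatever scale your rubric uses


def missing_evidence(entry: EvidenceEntry) -> list[str]:
    """Return the names of text fields that were left blank."""
    blank = []
    for field in fields(entry):
        value = getattr(entry, field.name)
        if isinstance(value, str) and not value.strip():
            blank.append(field.name)
    return blank


entry = EvidenceEntry(
    question="How would you design a job queue?",
    response="I would start with a message queue and ...",
    competency="System Design",
    notes="",
    score=4,
)
print(missing_evidence(entry))  # ['notes']
```

A check like this can run before a scorecard is submitted, so a score never enters the record without its reasoning.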

With JusRecruit’s AI Interview, this happens seamlessly. Each interview generates a complete Q&A transcript, tagged by skill category and timestamped for traceability. Recruiters can open any score and see the exact reasoning behind it: no assumptions, no blind spots.

7. Transparency and Accountability

The hallmark of a good interview evaluation system isn’t just efficiency; it’s transparency.

When everyone involved in hiring can review the same evidence, alignment becomes effortless.

That means:

- Every interview can be replayed or reviewed.

- Every piece of feedback is backed by transcripts, not recollection.

- Every decision is based on what was actually said, not what was remembered.

This kind of visibility doesn’t just benefit hiring teams; it benefits candidates, too.

When interviews are fair, structured, and traceable, trust in the process grows on both sides.

With JusRecruit, this transparency comes alive. Whether it’s a phone screen playback or a recorded AI interview, every conversation is preserved with transcripts, insights, and scorecards, ensuring accountability without friction.

8. Automation That Doesn’t Remove the Human Element

Automation in hiring shouldn’t replace people; it should empower them.

A candidate evaluation template that integrates structured automation (say, automatically generating summaries or analyzing transcripts) saves hours of manual effort while keeping decision-making human.

The best systems do exactly that: they take care of the repetitive parts (like summarizing, scoring, and data organization) and leave room for human judgment.

How to Build Your Own Candidate Evaluation Form

Here’s a practical, repeatable process you can use to create a candidate evaluation form that works for your team:

Step 1: Define the Role Competencies

Start by identifying the top 4–6 skills or traits that determine success in the role.

These will form the pillars of your evaluation criteria.

Step 2: Create a Scoring Rubric

For each competency, define what “Beginner,” “Practitioner,” “Proficient,” and “Expert” look like.

This ensures every interviewer knows what to look for.
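One way to keep a rubric honest is to store it as data and check that no level is left undefined. The sketch below assumes a hypothetical `missing_anchors` helper and a plain dictionary layout; the anchor wording is placeholder text for illustration only, so write your own for each role.

```python
# The four levels named in this step.
LEVELS = ("Beginner", "Practitioner", "Proficient", "Expert")


def missing_anchors(rubric: dict[str, dict[str, str]]) -> dict[str, list[str]]:
    """Return, per competency, the levels that have no behavioral anchor yet."""
    gaps = {}
    for competency, anchors in rubric.items():
        missing = [level for level in LEVELS if not anchors.get(level, "").strip()]
        if missing:
            gaps[competency] = missing
    return gaps


# Placeholder anchors, not recommended wording.
rubric = {
    "Communication": {
        "Beginner": "Answers are hard to follow without prompting.",
        "Practitioner": "Explains ideas clearly when asked follow-up questions.",
        "Proficient": "Structures answers clearly and checks for understanding.",
        "Expert": "Adapts explanations to the audience and handles pushback well.",
    },
    "System design": {
        "Beginner": "Describes components but not how they interact.",
        "Expert": "Compares designs and articulates trade-offs.",
    },
}

print(missing_anchors(rubric))  # {'System design': ['Practitioner', 'Proficient']}
```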

Step 3: Add Structured Questions

Build your interview around questions that map to each competency.

This makes your structured interviews measurable and consistent.

Step 4: Include Notes and Evidence Fields

Add space for observations, examples, or quotes from the candidate.

You can even attach recordings or transcripts to your interview assessments for context.

Step 5: Calibrate and Iterate

After a few interviews, compare feedback across interviewers.

If discrepancies arise, refine your parameters or scales.

Over time, you’ll have a hiring scorecard that feels objective, predictable, and truly useful.
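The calibration step can be as simple as looking at how far apart interviewers land on each competency. Here is a rough sketch, assuming ratings on a 1–5 scale, a hypothetical `flag_discrepancies` helper, and an arbitrary default threshold of one point; pick whatever threshold suits your team.

```python
def flag_discrepancies(
    scores: dict[str, dict[str, int]], max_spread: int = 1
) -> dict[str, int]:
    """Return competencies whose highest and lowest ratings differ by more than max_spread.

    `scores` maps interviewer name -> competency -> rating.
    """
    by_competency: dict[str, list[int]] = {}
    for ratings in scores.values():
        for competency, rating in ratings.items():
            by_competency.setdefault(competency, []).append(rating)

    flagged = {}
    for competency, ratings in by_competency.items():
        spread = max(ratings) - min(ratings)
        if spread > max_spread:
            flagged[competency] = spread
    return flagged


# Made-up ratings for a single candidate.
scores = {
    "Interviewer A": {"Communication": 4, "System design": 3},
    "Interviewer B": {"Communication": 2, "System design": 3},
}

print(flag_discrepancies(scores))  # {'Communication': 2}
```

A flagged competency is a prompt to revisit its anchors or scale, not a verdict on either interviewer.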

Why a Scorecard Is More Than Just a Form

A good scorecard isn’t paperwork.

It’s a decision-making framework, one that protects hiring teams from bias, inconsistency, and “gut-feel” decisions.

It’s also one of the most effective ways to scale culture.

When every new hire is evaluated on the same core criteria, you start building teams with consistent strengths, values, and capabilities.

And when you can back every “yes” or “no” with structured reasoning, hiring becomes less about opinion and more about evidence.

Wrapping Up

If you’re struggling with inconsistent interviews, slow decisions, or subjective feedback loops, your scorecard might be the quiet culprit.

A well-designed candidate evaluation form changes that.

It transforms your process from reactive to reliable.

When your hiring teams work with structure, transparency, and fairness, every decision starts to feel confident.

And when that happens, consistency isn’t just a hiring metric anymore; it becomes your competitive edge.